Multi-target detection of underground personnel based on an improved YOLOv8n model


摘要:

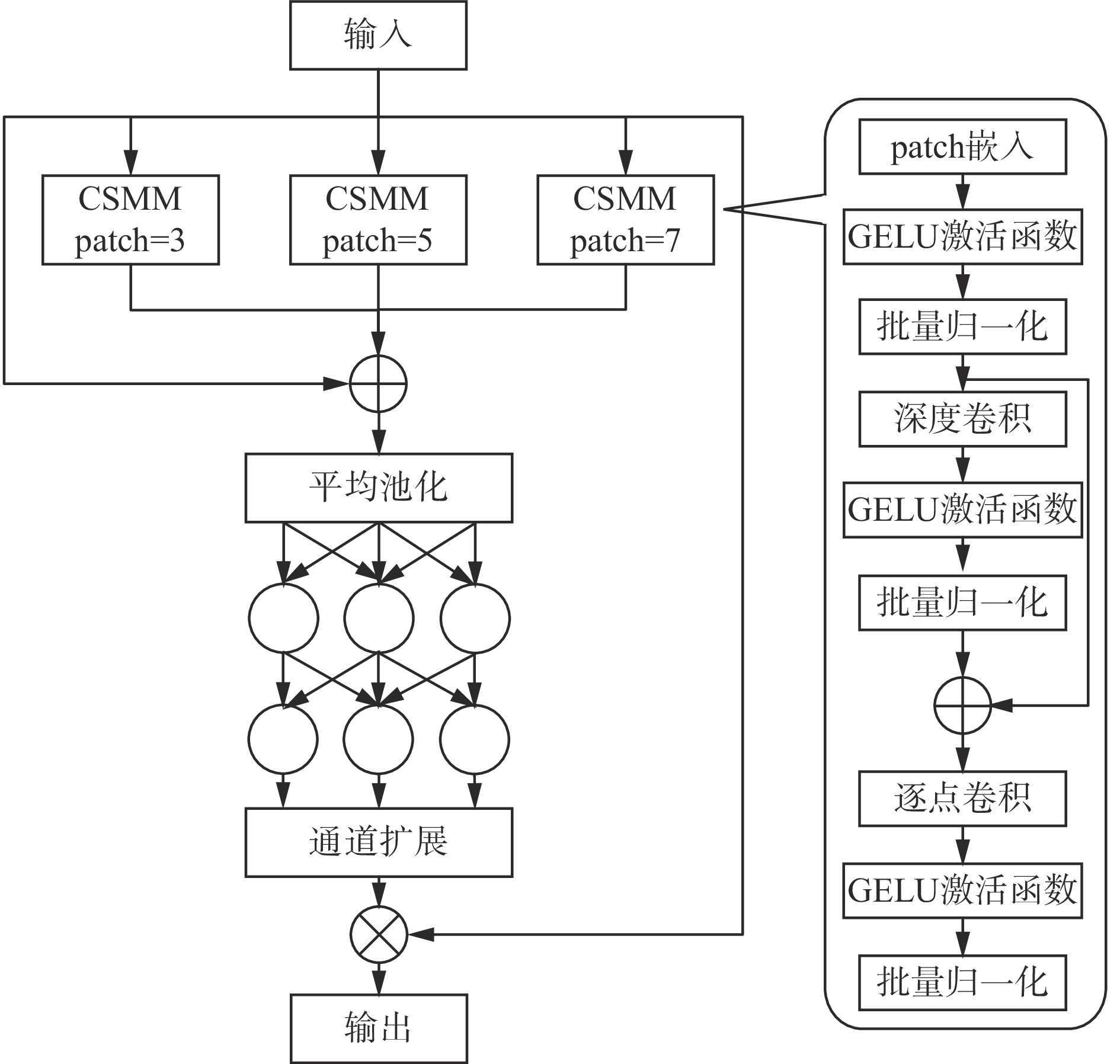

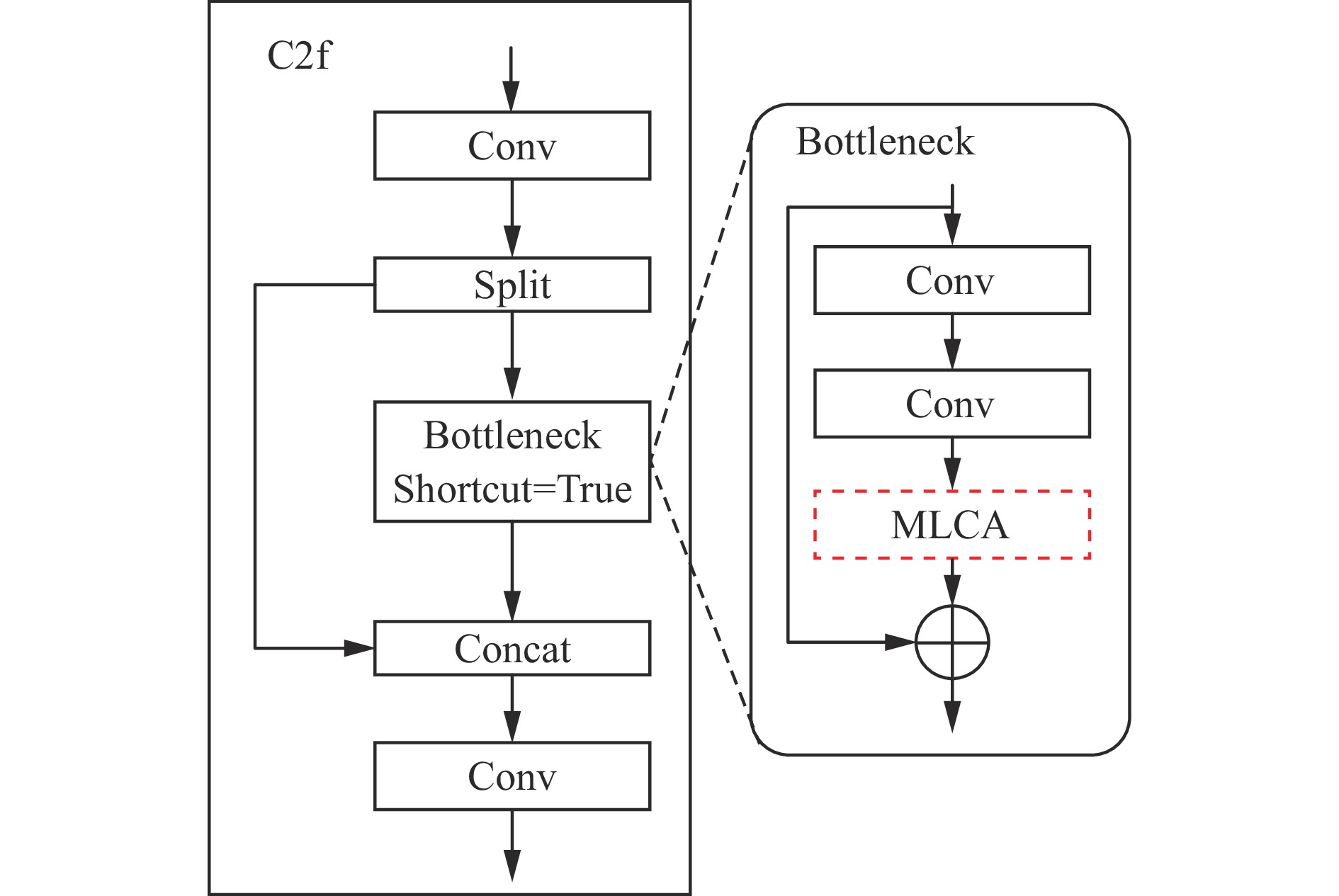

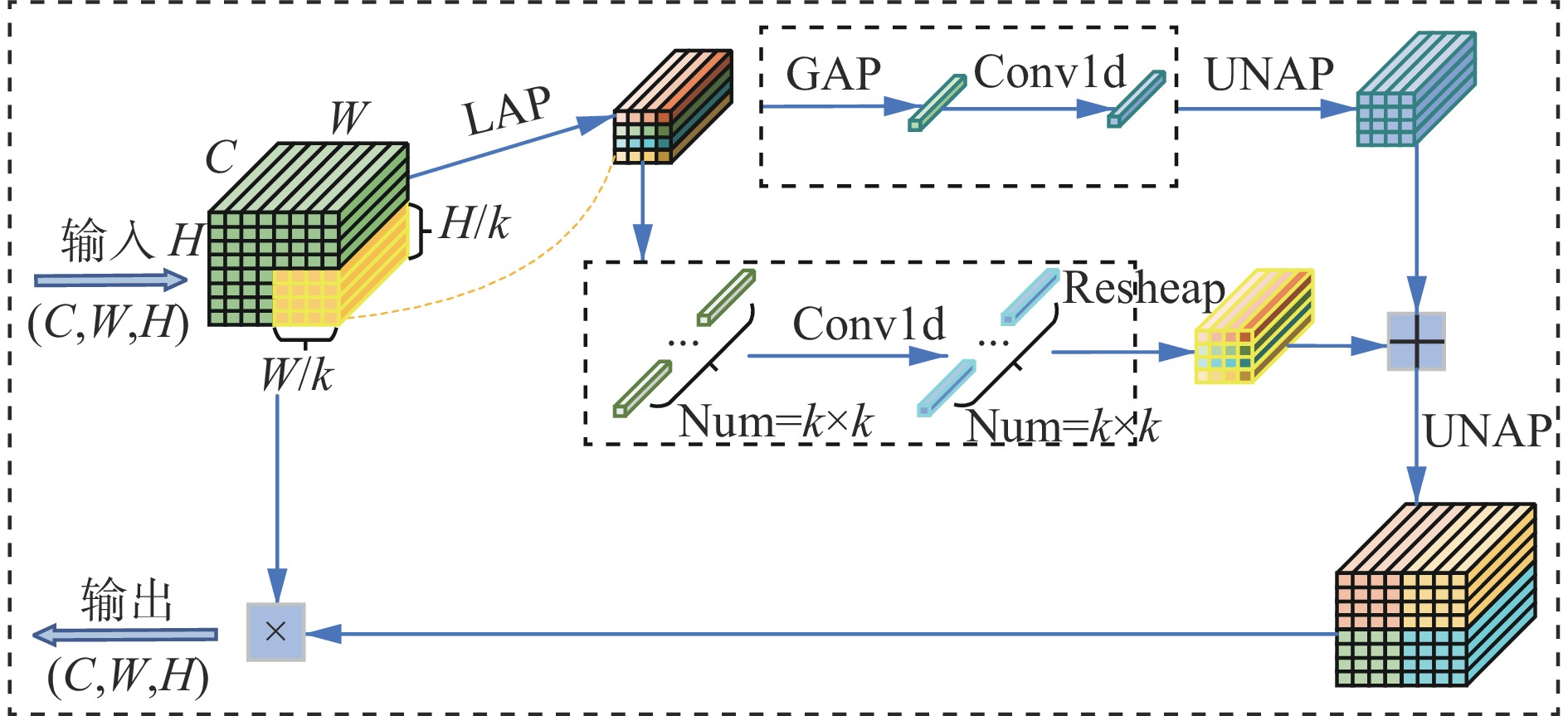

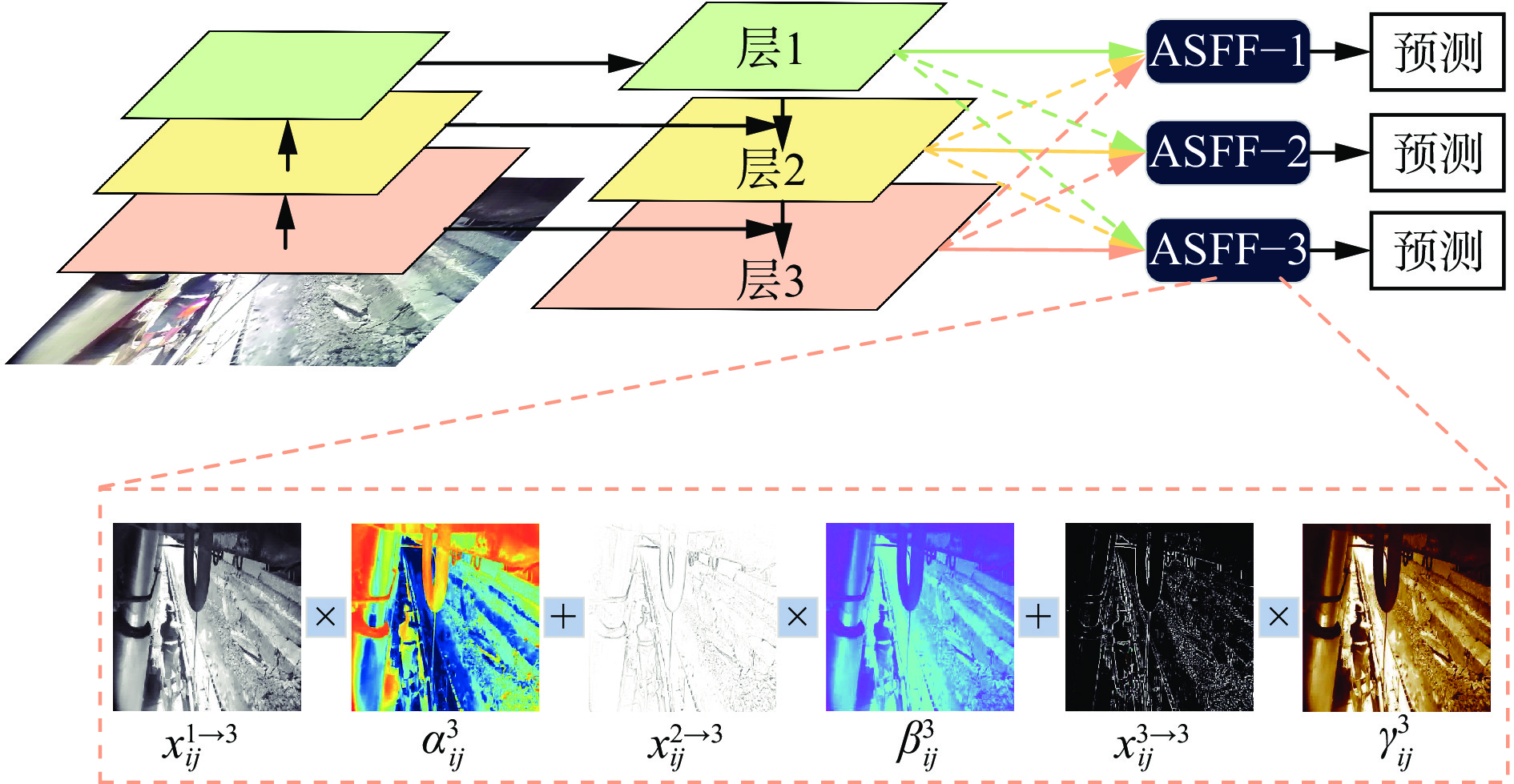

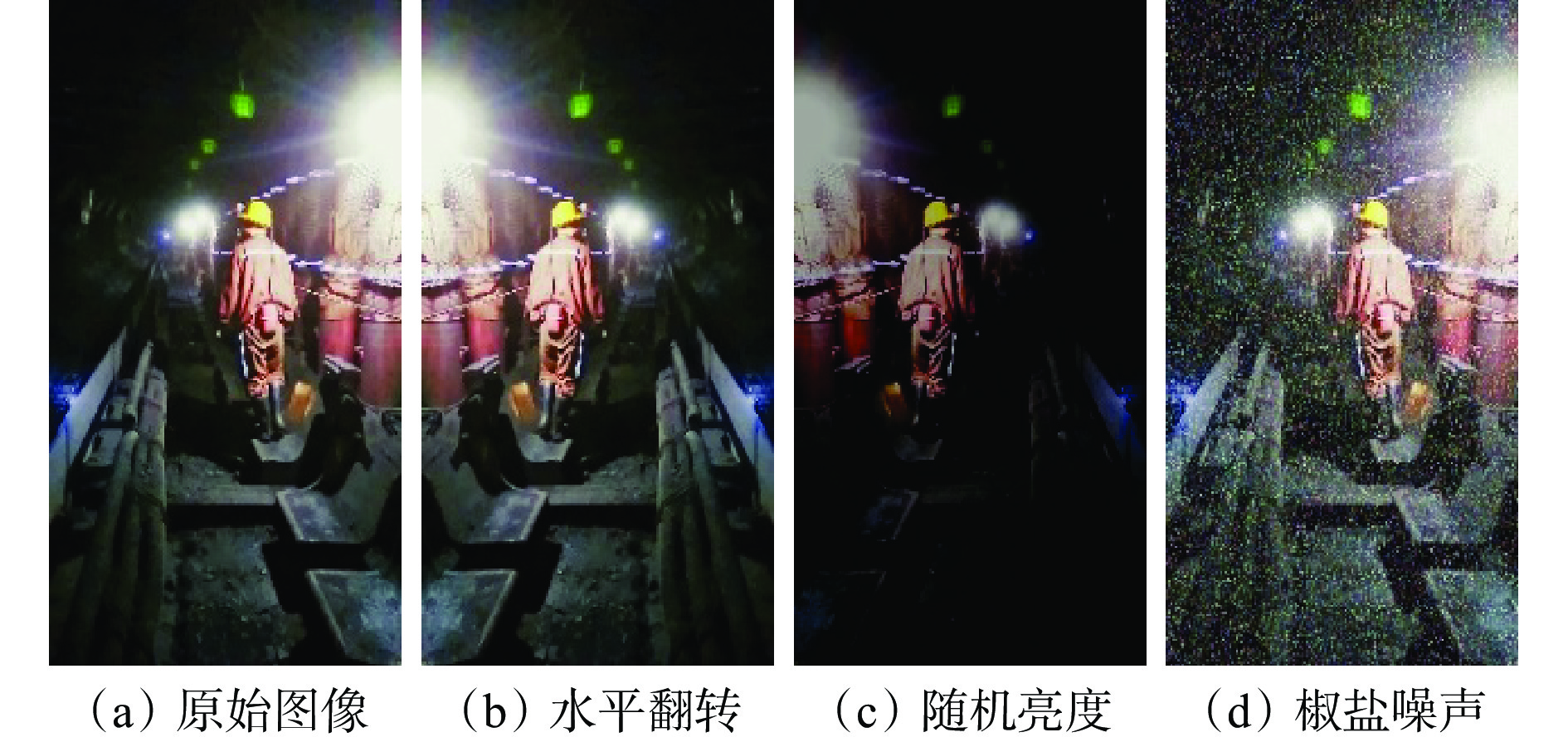

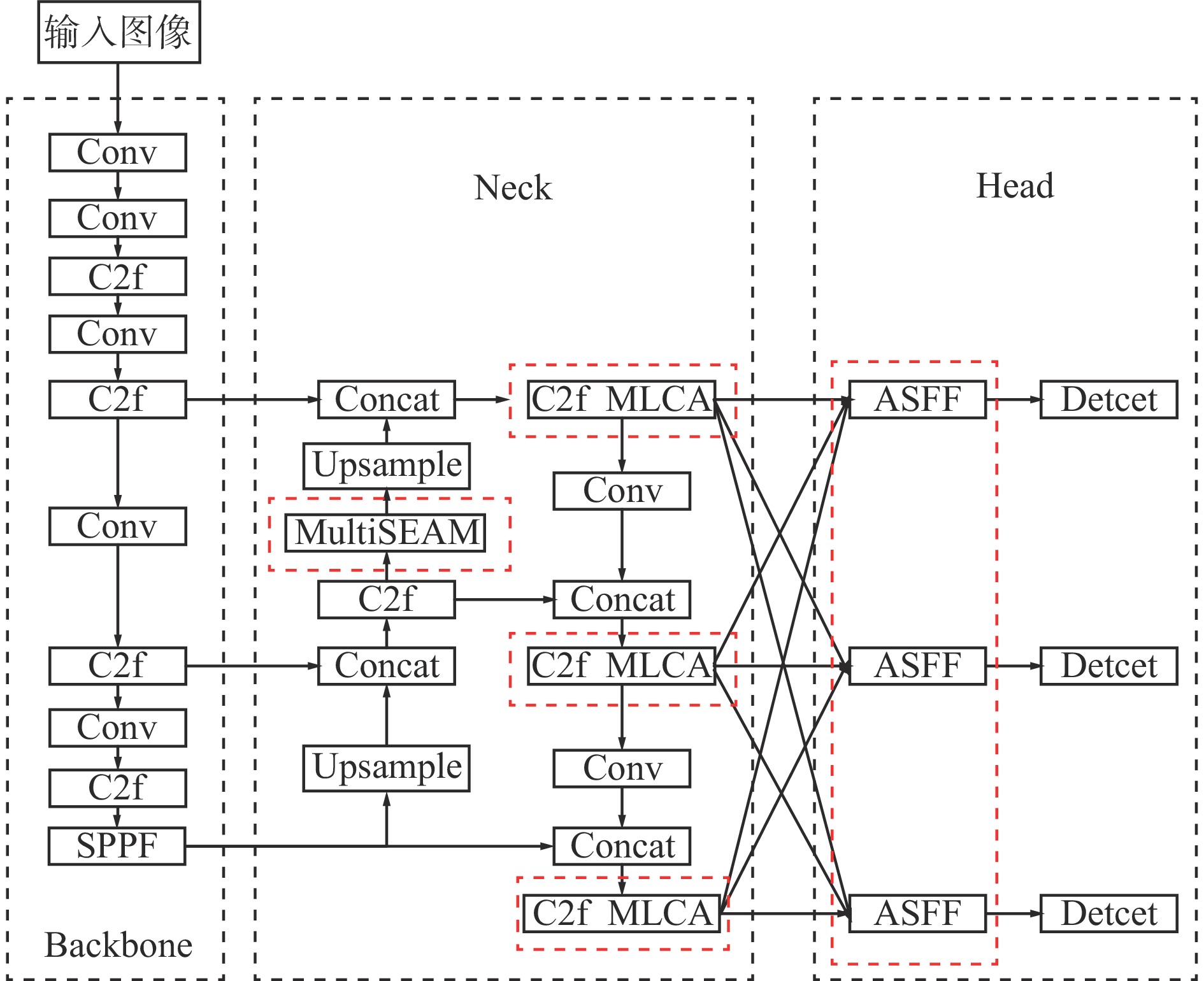

针对井下危险区域人员监测视频存在光照不均匀、目标尺度不一致、遮挡等复杂情况,基于YOLOv8n网络结构,提出一种改进的井下人员多目标检测算法—YOLOv8n−MSMLAS。该算法对YOLOv8n的Neck层进行改进,添加多尺度空间增强注意力机制(MultiSEAM),以增强对遮挡目标的检测性能;在C2f模块中引入混合局部通道注意力(MLCA)机制,构建C2f−MLCA模块,以融合局部和全局特征信息,提高特征表达能力;在Head层检测头中嵌入自适应空间特征融合(ASFF)模块,以增强对小尺度目标的检测性能。实验结果表明:① 与Faster R−CNN,SSD,RT−DETR,YOLOv5s,YOLOv7等主流模型相比,YOLOv8n−MSMLAS综合性能表现最佳,mAP@0.5和mAP@0.5:0.95分别达到93.4%和60.1%,FPS为80.0帧/s,参数量为5.80×106个,较好平衡了模型的检测精度和复杂度。② YOLOv8n−MSMLAS在光照不均、目标尺度不一致、遮挡等条件下表现出较好的检测性能,适用于现场检测。

-

关键词:

- 煤矿井下危险区域 /

- 井下人员多目标检测 /

- YOLOv8n /

- 多尺度空间增强注意力机制 /

- 自适应空间特征融合 /

- 轻量化混合局部通道注意力机制

Abstract:This study aims to address the complex challenges in monitoring underground personnel in hazardous areas, including uneven lighting, target scale inconsistency, and occlusion. An innovative multi-target detection algorithm, YOLOv8n-MSMLAS, was proposed based on the YOLOv8n network structure. The algorithm modified the Neck layer by incorporating a Multi-Scale Spatially Enhanced Attention Mechanism (MultiSEAM) to enhance the detection of occluded targets. Furthermore, a Hybrid Local Channel Attention (MLCA) mechanism was introduced into the C2f module to create the C2f-MLCA module, which fused local and global feature information, thereby improving feature representation. An Adaptive Spatial Feature Fusion (ASFF) module was embedded in the Head layer to boost detection performance for small-scale targets. Experimental results demonstrated that YOLOv8n-ASAM outperformed mainstream models such as Faster R-CNN, SSD, RT-DETR, YOLOv5s, and YOLOv7 in terms of overall performance, achieving mAP@0.5 and mAP@0.5: 0.95 of 93.4% and 60.1%, respectively,with a speed of 80.0 frames per second,the parameter is 5.80×106, effectively balancing accuracy and complexity. Moreover, YOLOv8n-ASAM exhibited superior performance under uneven lighting, target scale inconsistency, and occlusion, making it well-suited for real-world applications.
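The abstract names an ASFF module in the detection head but does not spell out its fusion rule. The sketch below illustrates the general idea usually associated with adaptive spatial feature fusion: at each spatial location, features from several pyramid levels are combined with softmax-normalised weights that sum to 1. It is a minimal illustration under stated assumptions, not the authors' implementation. The function names, the three-level setup, and all numeric values are hypothetical, and the resizing of levels to a common shape is assumed to happen upstream.

```python
import math


def softmax(values):
    """Numerically stable softmax over a short list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_location(features, logits):
    """Fuse the feature vectors of several pyramid levels at one location.

    features: one feature vector per level, assumed to be already resized
              to a common resolution and channel count.
    logits:   one scalar per level. In a trained network these would be
              predicted per location; here they are plain inputs.
    """
    weights = softmax(logits)  # non-negative and summing to 1
    channels = len(features[0])
    fused = [
        sum(w * level[c] for w, level in zip(weights, features))
        for c in range(channels)
    ]
    return fused, weights


# Hypothetical values: three levels, two channels, one spatial location.
features = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]

# Equal logits give equal weights, so the result is a plain average.
fused_equal, weights_equal = fuse_location(features, [0.0, 0.0, 0.0])

# A dominant logit makes the fusion follow that level almost exclusively.
fused_peaked, weights_peaked = fuse_location(features, [10.0, 0.0, 0.0])

print(weights_equal, fused_equal)
print(weights_peaked, fused_peaked)
```

Because the weights are computed per location, a level that carries fine detail can dominate where small targets appear while a coarser level dominates elsewhere, which is the motivation the abstract gives for using ASFF on small-scale targets.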


Table 1 Environmental configuration parameters

| Environment | Configuration |
| --- | --- |
| CPU | 12th Gen Intel(R) Core(TM) i7-12650H |
| GPU | RTX 3030 (24 GiB) |
| Runtime environment | Python 3.9, CUDA 11.8 |
| Deep learning framework | PyTorch 1.12.1 |
| Programming language | Python 3.9.7 |

Table 2 Ablation experiment results

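| Model | MLCA | MultiSEAM | ASFF | Precision/% | Recall/% | mAP@0.5/% | mAP@0.5:0.95/% | FPS/(frames·s⁻¹) | Parameters/10⁶ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| YOLOv8n | × | × | × | 91.7 | 87.2 | 92.0 | 59.0 | 128.2 | 3.01 |
| Improved model 1 | √ | × | × | 93.7 | 86.3 | 92.9 | 59.1 | 109.9 | 3.01 |
| Improved model 2 | × | √ | × | 96.5 | 86.2 | 92.5 | 58.4 | 108.7 | 4.42 |
| Improved model 3 | × | × | √ | 95.6 | 88.8 | 93.4 | 58.9 | 104.2 | 4.38 |
| Improved model 4 | √ | √ | × | 95.3 | 89.6 | 93.4 | 58.3 | 101.1 | 4.43 |
| Improved model 5 | √ | × | √ | 95.8 | 85.8 | 92.9 | 56.5 | 91.7 | 4.38 |
| Improved model 6 | × | √ | √ | 96.2 | 89.0 | 92.6 | 59.1 | 88.5 | 5.80 |
| Improved model 7 | √ | √ | √ | 97.0 | 87.3 | 93.4 | 60.1 | 80.0 | 5.80 |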
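The trade-off reported in the Table 2 ablation can be made concrete with a little arithmetic. The sketch below compares the YOLOv8n baseline row with the row where all three modules are enabled (improved model 7). The numbers are copied from Table 2; the dictionary keys and helper names are illustrative choices, not part of the source.

```python
# Rows copied from Table 2 (baseline vs. all three modules enabled).
# Keys are illustrative names; params_m is the parameter count in 10^6.
baseline = {"precision": 91.7, "recall": 87.2, "map50": 92.0,
            "map50_95": 59.0, "fps": 128.2, "params_m": 3.01}
full_model = {"precision": 97.0, "recall": 87.3, "map50": 93.4,
              "map50_95": 60.1, "fps": 80.0, "params_m": 5.80}


def absolute_gain(key):
    """Difference in percentage points (or raw units) versus the baseline."""
    return round(full_model[key] - baseline[key], 1)


def relative_change(key):
    """Percentage change versus the baseline."""
    return round(100.0 * (full_model[key] - baseline[key]) / baseline[key], 1)


for key in ("precision", "recall", "map50", "map50_95"):
    print(f"{key}: {absolute_gain(key):+.1f} points")
for key in ("fps", "params_m"):
    print(f"{key}: {relative_change(key):+.1f}%")
```

On these figures the full model gains 1.4 points of mAP@0.5 and 1.1 points of mAP@0.5:0.95 over the baseline, while FPS falls by about 37.6% and the parameter count rises by about 92.7%.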

Table 3 Comparison experiment results

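| Model | mAP@0.5/% | mAP@0.5:0.95/% | FPS/(frames·s⁻¹) | Parameters/10⁶ |
| --- | --- | --- | --- | --- |
| Faster R-CNN | 91.9 | 52.7 | 71.6 | 137.10 |
| SSD | 91.5 | 55.7 | 95.1 | 26.29 |
| RT-DETR | 93.3 | 59.0 | 88.5 | 19.87 |
| YOLOv5s | 92.7 | 59.2 | 109.9 | 9.11 |
| YOLOv7 | 93.2 | 53.5 | 59.5 | 36.48 |
| YOLOv8n | 92.0 | 59.0 | 128.2 | 3.01 |
| YOLOv8s | 93.4 | 59.9 | 120.5 | 11.13 |
| YOLOv8n-MSMLAS | 93.4 | 60.1 | 80.0 | 5.80 |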
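To read the Table 3 comparison in terms of the accuracy and complexity balance the abstract emphasises, the sketch below computes, for each comparison model, the gap in mAP@0.5:0.95 to YOLOv8n-MSMLAS and the ratio of parameter counts. The values are copied from Table 3; the variable and function names are illustrative assumptions.

```python
# Rows copied from Table 3:
# model -> (mAP@0.5 %, mAP@0.5:0.95 %, FPS, parameters in 10^6)
TABLE3 = {
    "Faster R-CNN": (91.9, 52.7, 71.6, 137.10),
    "SSD": (91.5, 55.7, 95.1, 26.29),
    "RT-DETR": (93.3, 59.0, 88.5, 19.87),
    "YOLOv5s": (92.7, 59.2, 109.9, 9.11),
    "YOLOv7": (93.2, 53.5, 59.5, 36.48),
    "YOLOv8n": (92.0, 59.0, 128.2, 3.01),
    "YOLOv8s": (93.4, 59.9, 120.5, 11.13),
    "YOLOv8n-MSMLAS": (93.4, 60.1, 80.0, 5.80),
}
PROPOSED = "YOLOv8n-MSMLAS"


def compare_to_proposed(table, proposed):
    """Return (model, mAP@0.5:0.95 gap in points, parameter ratio) tuples.

    A positive gap means the proposed model scores higher; a ratio above 1
    means the other model has more parameters than the proposed one.
    """
    ref_map = table[proposed][1]
    ref_params = table[proposed][3]
    rows = []
    for name, (_map50, map50_95, _fps, params) in table.items():
        if name == proposed:
            continue
        gap = round(ref_map - map50_95, 1)
        ratio = round(params / ref_params, 2)
        rows.append((name, gap, ratio))
    return sorted(rows, key=lambda row: row[1])  # smallest gap first


comparison = compare_to_proposed(TABLE3, PROPOSED)
for name, gap, ratio in comparison:
    print(f"{name:13s} mAP@0.5:0.95 gap {gap:+.1f}, parameter ratio {ratio:.2f}x")
```

On the Table 3 numbers, every listed model has a lower mAP@0.5:0.95 than YOLOv8n-MSMLAS, and only YOLOv8n has fewer parameters. Several models, including YOLOv8n and YOLOv8s, run at a higher FPS.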

